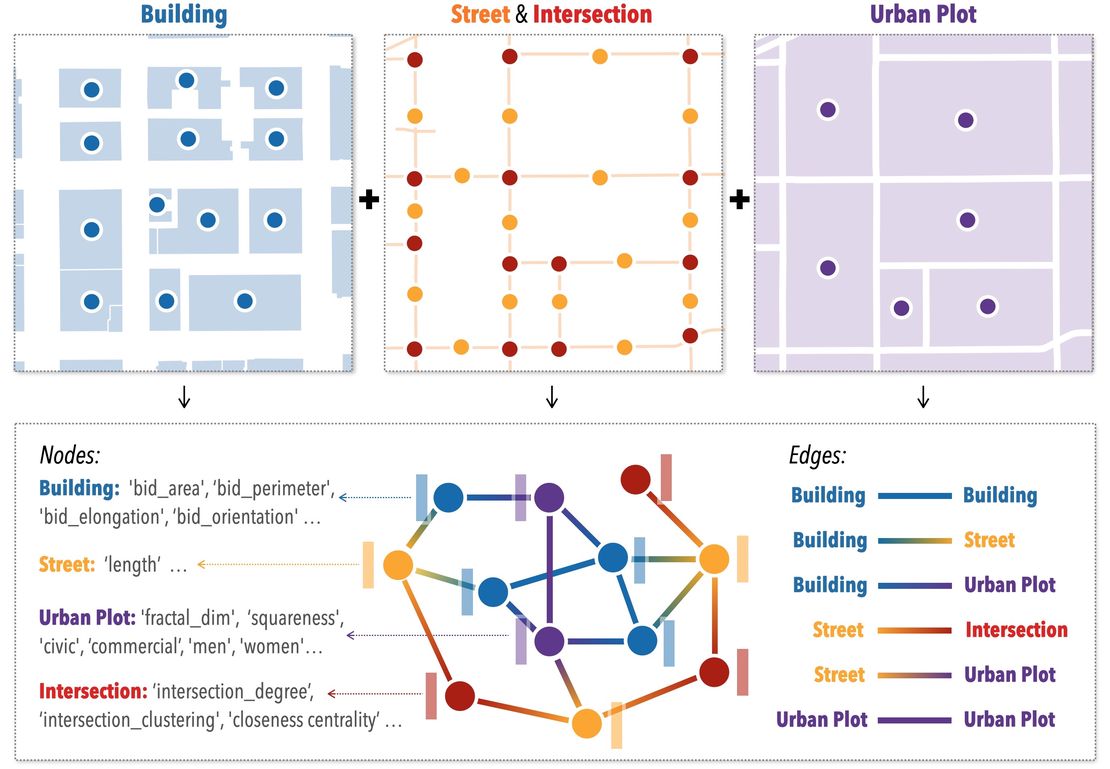

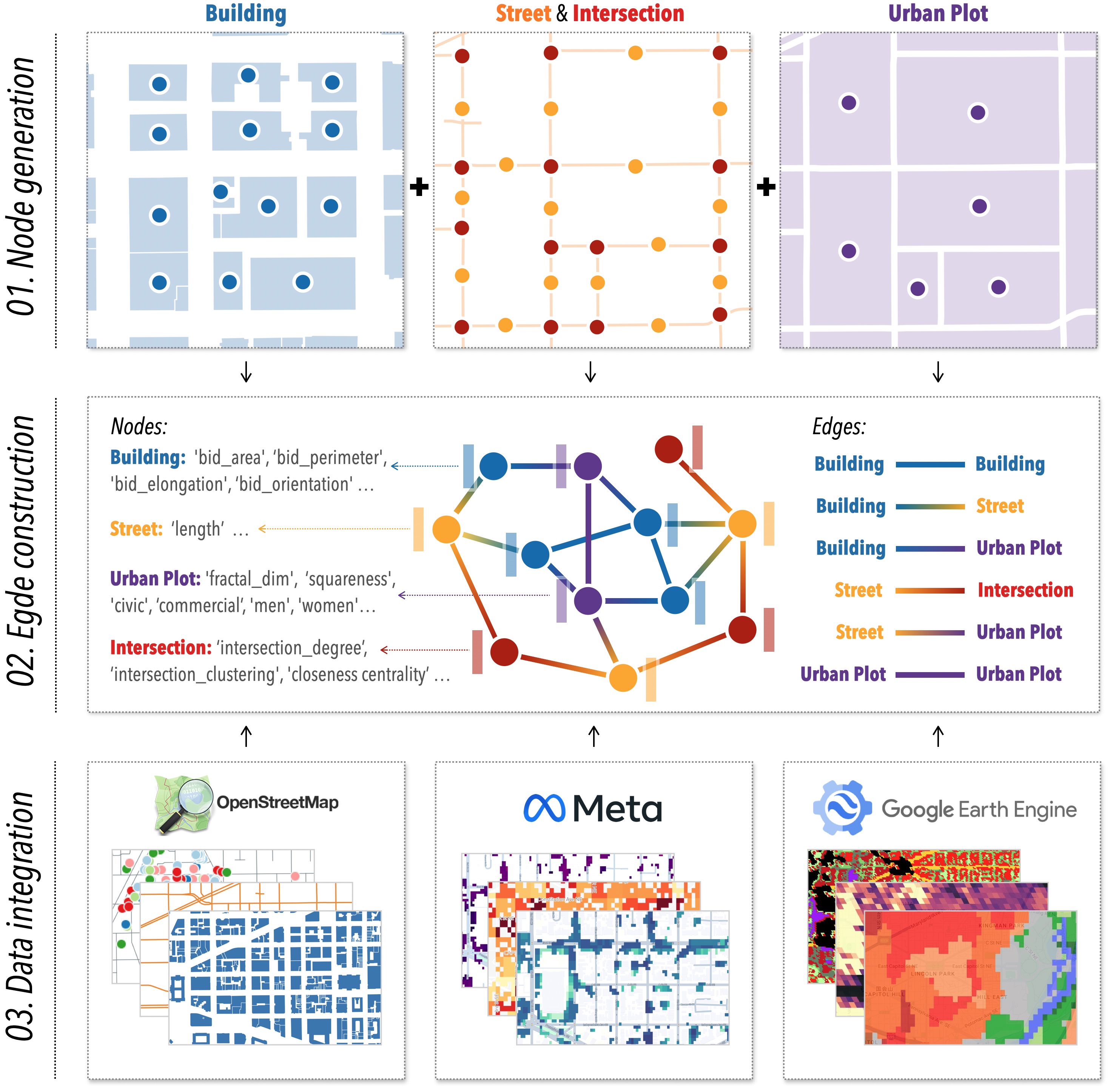

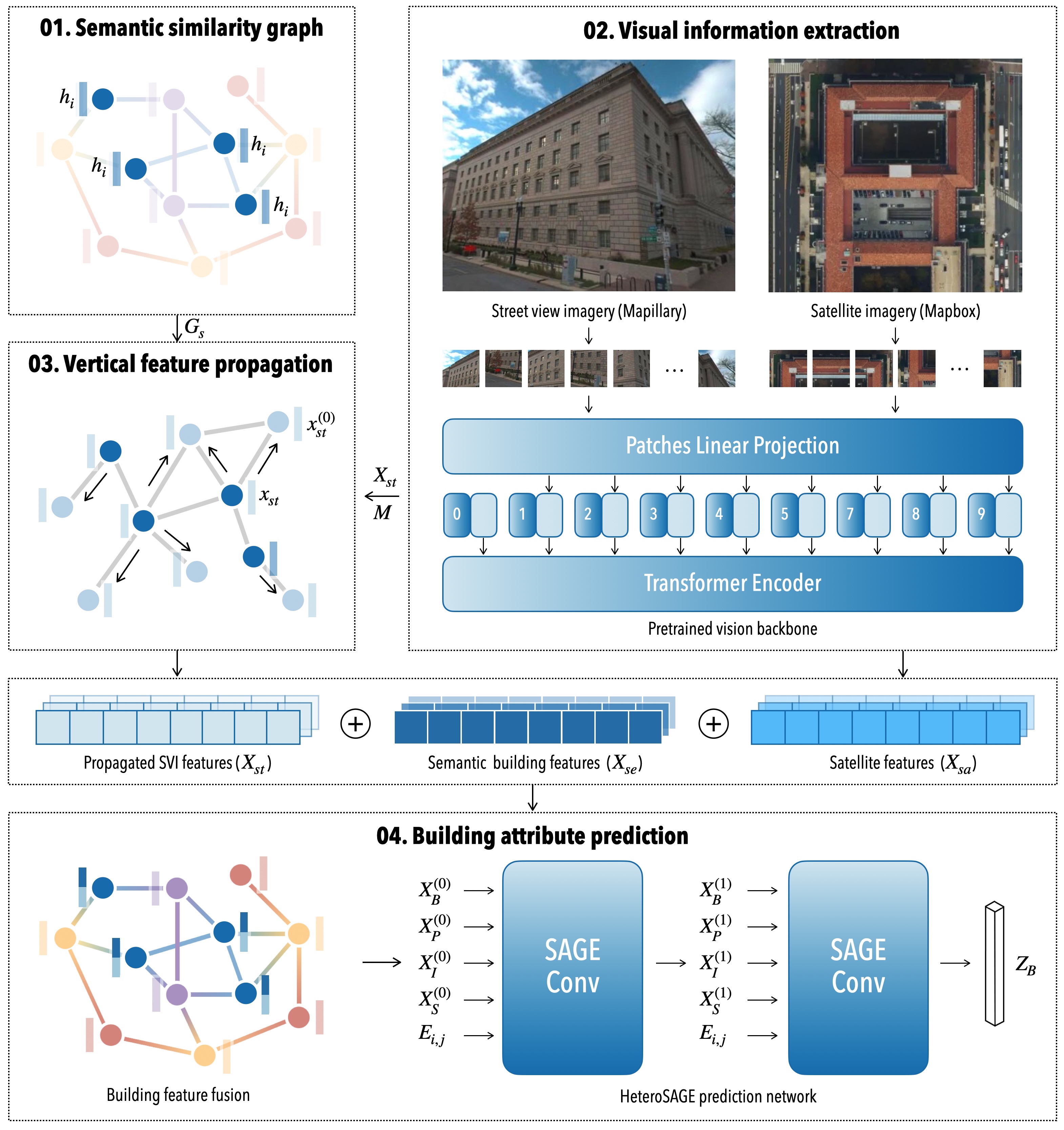

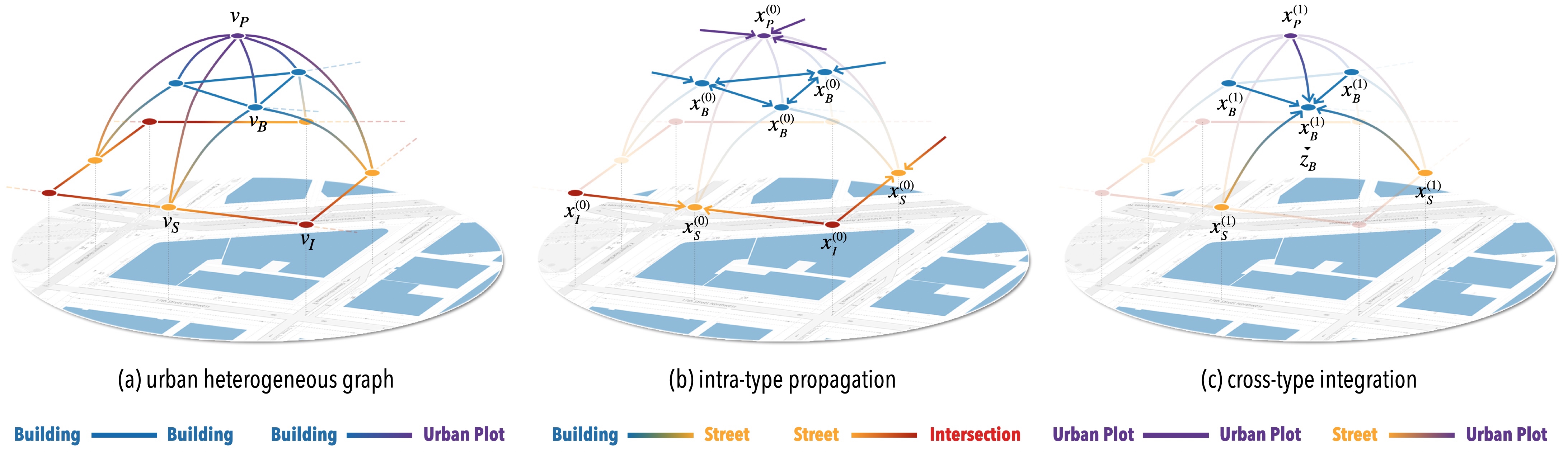

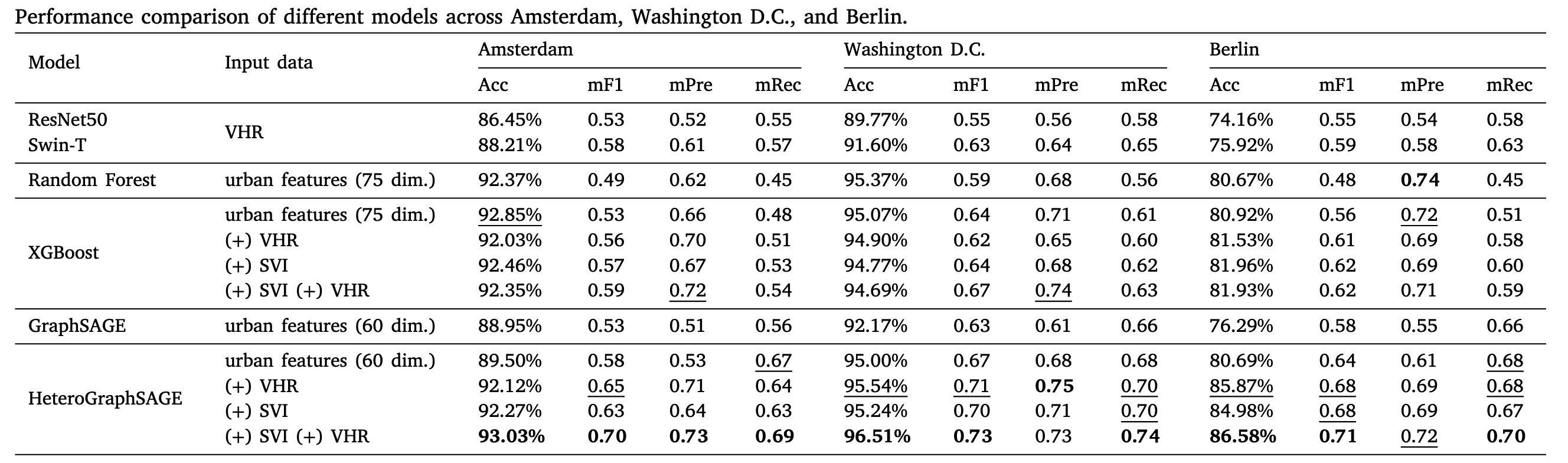

Paper: Heterogeneous graph neural networks for building attribute prediction from hierarchical urban features and cross-view imagery

Abstract

Related Publications

Related Posts

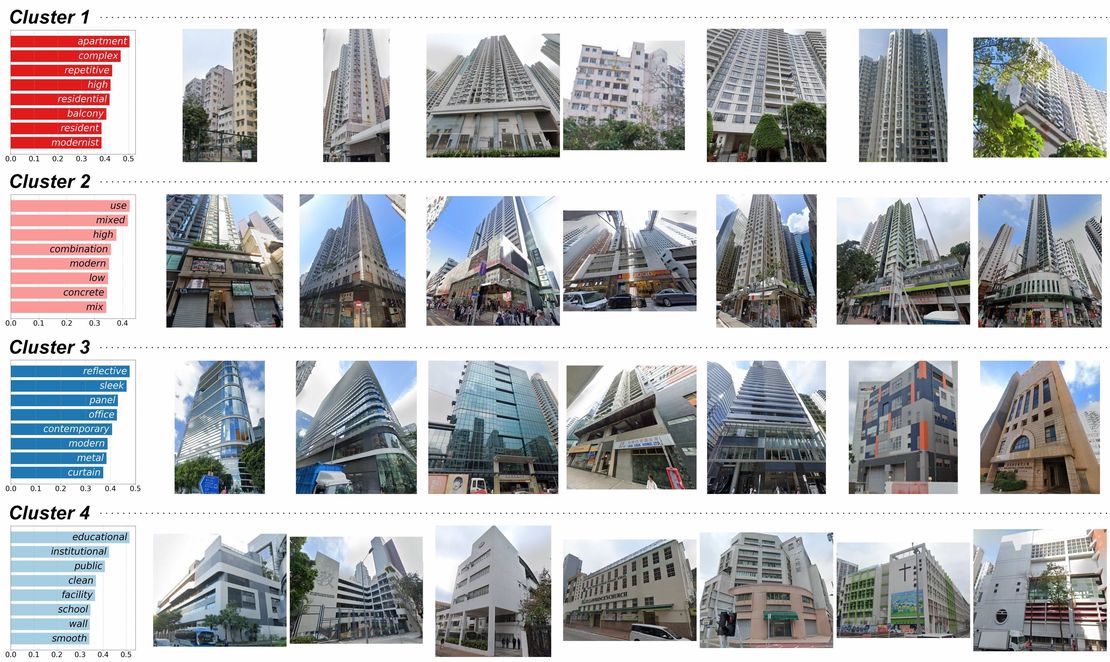

Paper: Decoding characteristics of building facades using street view imagery and vision-language model

This study uses street view imagery (SVI) and vision-language models to analyze 48,752 building images in Hong Kong, identifying eight building clusters. It demonstrates the potential of scalable SVI-based analyses to capture urban spatial and semantic details, improving understanding of the built environment.


Paper: Revealing spatio-temporal evolution of urban visual environments with street view imagery

This study presents an embedding-driven clustering approach that combines physical and perceptual attributes to analyze the spatial structure and spatio-temporal evolution of urban visual environments. Using Singapore as a case study, it leverages street view imagery and graph neural networks to classify streetscapes into six clusters, revealing changes over the past decade. The findings provide insights into urban visual dynamics, supporting planning and landscape improvement.


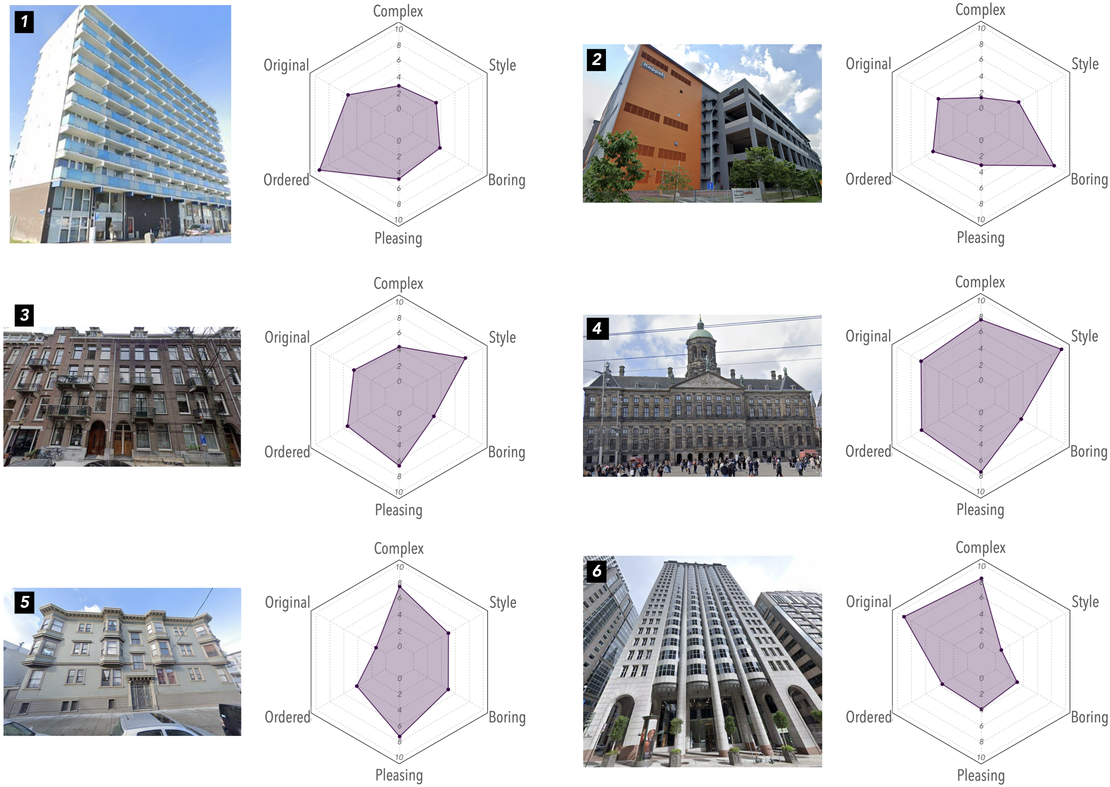

Paper: Evaluating human perception of building exteriors using street view imagery

This study explores how building appearances shape urban perception, using machine learning and survey data to analyze human responses to over 250,000 building images from Singapore, San Francisco, and Amsterdam. Findings reveal how architectural styles influence streetscape perceptions, offering insights for architects and city planners.
